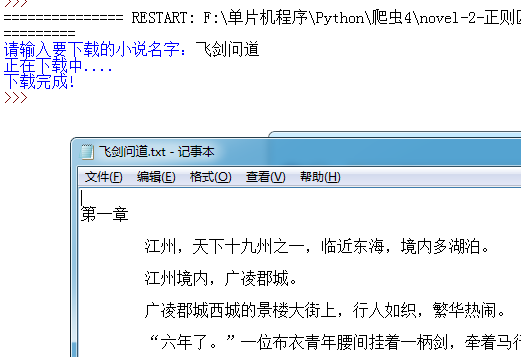

I wrote a crawler that downloads novels. It can fetch most of the novels near the top of the site's rankings.

Here is what it looks like when run:

The code is as follows:

    import re
    import urllib.request

    from bs4 import BeautifulSoup as bs

    # Base URL of the ranking pages; the page number and '.html' are appended
    url_1 = 'http://www.23us.so/top/allvisit_'


    # Open a web page and return its HTML as text
    def url_open(url):
        req = urllib.request.Request(url)
        req.add_header('User-Agent',
                       'Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:57.0) '
                       'Gecko/20100101 Firefox/57.0')
        # The context manager closes the response even if read() or decode() fails
        with urllib.request.urlopen(req, timeout=10) as response:
            return response.read().decode('utf-8')


    # Find the novel's link on a ranking page; return None if the title is not listed
    def get_url(html, word):
        # re.escape keeps characters such as '(' or '?' in the title from acting as regex syntax
        search_index = re.escape(word) + r'</a></td>(\s*?)<td class="\w"><a href="(.*?)"'
        m = re.search(search_index, html)
        if m is None:
            return None
        return m.group(2)


    # Return a list of tuples (chapter URL, '第N章', N, rest of the title)
    def get_chapters(html):
        p = re.compile(r'a href="(.{50,70}?)">(第(.+?)章)(.*?)</a>')
        return p.findall(html)


    # Download every chapter and append it to '<title>.txt'
    def download_chapters(chapter_list, word):
        file_name = word + '.txt'
        # p = re.compile(r'<dd id="contents">(.*?)</dd>', re.DOTALL)
        with open(file_name, 'w', encoding='utf-8') as f:
            for each in chapter_list:
                html = url_open(each[0])
                soup = bs(html, "html.parser")
                f.write('\n' + each[1] + '\n')
                temp = soup.find_all('dd', id='contents')
                # Skip the body if the page has no <dd id="contents"> element
                if temp:
                    f.write(temp[0].text)


    def download_novel():
        word = input("Enter the title of the novel to download: ")
        i = 0
        while True:
            i += 1
            novel_search = url_1 + str(i) + '.html'
            # Fetch the ranking page
            html = url_open(novel_search)
            novel_url = get_url(html, word)
            # Fetch the chapter index page
            if novel_url is None:
                # Give up once the title has not been found in the first 50+ ranking pages
                if i > 50:
                    print('The novel you asked for cannot be downloaded at the moment')
                    break
            else:
                print('Downloading....')
                chapter_list = get_chapters(url_open(novel_url))
                download_chapters(chapter_list, word)
                print('Download complete!')
                break


    if __name__ == '__main__':
        download_novel()
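To see what the two regular expressions in the script pick out, here is a small offline sketch. The HTML snippets are made up for illustration (the real markup on 23us.so may differ), and `find_novel_link` and `find_chapters` are hypothetical names that mirror `get_url` and `get_chapters`. The sketch also passes the title through `re.escape`, which is an assumption about how titles containing characters like `(` should be handled.

```python
import re

# Hypothetical ranking-page row: title cell followed by a cell linking to the chapter index.
RANK_HTML = (
    '<td class="L"><a href="http://example.com/xiaoshuo/1.html">Novel (Revised)</a></td>\n'
    '<td class="L"><a href="http://example.com/files/article/html/0/1/index.html">latest</a></td>'
)

# Hypothetical chapter index; each link is 53 characters long, inside the 50-70 range the regex expects.
INDEX_HTML = (
    '<td class="L"><a href="http://example.com/files/article/html/0/1/100001.html">第一章 开始</a></td>'
    '<td class="L"><a href="http://example.com/files/article/html/0/1/100002.html">第二章 继续</a></td>'
)


def find_novel_link(html, title):
    """Return the href in the cell right after the title cell, or None if the title is absent."""
    pattern = re.escape(title) + r'</a></td>(\s*?)<td class="\w"><a href="(.*?)"'
    match = re.search(pattern, html)
    return match.group(2) if match else None


def find_chapters(html):
    """Return (url, chapter label) pairs for links whose text starts with 第...章."""
    pattern = re.compile(r'a href="(.{50,70}?)">(第(.+?)章)(.*?)</a>')
    return [(m[0], m[1]) for m in pattern.findall(html)]


if __name__ == '__main__':
    print(find_novel_link(RANK_HTML, 'Novel (Revised)'))
    for url, label in find_chapters(INDEX_HTML):
        print(label, url)
```

Note that the `.{50,70}` bound ties the chapter pattern to the length of the site's URLs, so chapter links that are shorter or longer than that are silently skipped.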

The code is fairly simple, and more features remain to be added.
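One feature that might be worth adding is retrying a request that fails, since a single timeout currently aborts the whole download. The following is only a minimal sketch under that assumption: `fetch_with_retry` is a hypothetical helper that is not part of the script, and it takes the fetch function as a parameter so it can be tried without network access.

```python
import time


def fetch_with_retry(fetch, url, retries=3, delay=1.0):
    """Call fetch(url); on a network error wait `delay` seconds and try again.

    URLError and socket timeouts are both subclasses of OSError, so catching
    OSError covers the errors urllib.request.urlopen typically raises.
    """
    if retries < 1:
        raise ValueError('retries must be at least 1')
    last_error = None
    for attempt in range(retries):
        try:
            return fetch(url)
        except OSError as err:
            last_error = err
            # Sleep only if another attempt is still coming
            if attempt < retries - 1:
                time.sleep(delay)
    raise last_error
```

In the script this could be used as `html = fetch_with_retry(url_open, each[0])` inside `download_chapters`.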


( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)
