
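I asked about this once before, and @SixPy gave a simple answer. I thought I understood it at the time, so I didn't follow up. But when I tried out that idea over the past couple of days, it failed.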

http://bbs.fishc.com/forum.php?m ... peid%26typeid%3D393

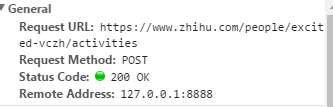

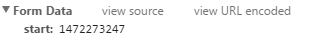

Earlier I had found that every click on "More" sends a POST with a `start` parameter, whose value is the `data-time` of the last record on his profile page.
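The mechanism described here is cursor-style pagination: the client reads the `data-time` of the last record it already has and sends it back as `start`. Below is a minimal stdlib-only sketch of that one step, assuming the records carry a `data-time` attribute as described. `DataTimeCollector` and `build_more_body` are hypothetical names, and the sample markup and timestamps are made up.

```python
from html.parser import HTMLParser
from urllib.parse import urlencode


class DataTimeCollector(HTMLParser):
    """Collect every data-time attribute value in document order."""

    def __init__(self):
        super().__init__()
        self.times = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for this start tag
        for name, value in attrs:
            if name == "data-time" and value is not None:
                self.times.append(value)


def build_more_body(html, extra=None):
    """Return the urlencoded POST body for the next "More" request,
    using the data-time of the last record on the page as `start`."""
    parser = DataTimeCollector()
    parser.feed(html)
    parser.close()
    if not parser.times:
        raise ValueError("no element with a data-time attribute found")
    fields = {"start": parser.times[-1]}
    if extra:
        fields.update(extra)  # other form fields the endpoint may expect
    return urlencode(fields).encode("utf-8")


# Made-up markup and timestamps, for illustration only
sample = """
<div class="zm-profile-section-item" data-time="1467000000"></div>
<div class="zm-profile-section-item" data-time="1466990000"></div>
"""
print(build_more_body(sample))  # b'start=1466990000'
```

The `extra` dict is there so that any additional form field (for example a token) can be sent alongside `start` without changing the parsing logic.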


So my plan was to find the last `data-time`, POST it to that URL, and that should be it. I wrote the following code:

```python
import urllib.request as ur
import urllib.parse as up
from http.cookiejar import CookieJar
import re
from bs4 import BeautifulSoup
import os
import time

url = 'http://www.zhihu.com/'

# Build an opener that keeps cookies across requests
cj = CookieJar()
opener = ur.build_opener(ur.HTTPCookieProcessor(cj))
# The attribute is `addheaders` (plural); assigning `addheader` is silently ignored
opener.addheaders = [('User-Agent', 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/51.0.2704.106 Safari/537.36')]

# Fetch the _xsrf token from the home page
def get_xsrf():
    response = opener.open(url)
    html = response.read()
    soup = BeautifulSoup(html, 'html.parser')
    val = soup.find(attrs={'name': '_xsrf'})
    return val['value']

# Log in to Zhihu
url2 = ''.join([url, 'login/email'])
data = {}
data['_xsrf'] = get_xsrf()
data['password'] = 'your_password'  # placeholder
data['captcha_type'] = 'cn'
data['remember_me'] = 'true'
data['email'] = 'your_email'  # placeholder
response2 = opener.open(url2, up.urlencode(data).encode('utf-8'))

# vczh's ("轮子哥") profile page
url_lunzi = 'https://www.zhihu.com/people/excited-vczh'
response3 = opener.open(url_lunzi)
html2 = response3.read().decode('utf-8')
soup2 = BeautifulSoup(html2, 'html.parser')

# Collect the answers he upvoted
answer = []
url_more = 'https://www.zhihu.com/people/excited-vczh/activities'
page = soup2
start = None
while len(answer) < 30:
    # Keep only "upvoted an answer" (赞同了回答) activities not collected yet
    for item in page.find_all(name='div',
            class_="zm-profile-section-main zm-profile-section-activity-main zm-profile-activity-page-item-main"):
        if item not in answer and '赞同了回答' in item.span.next_sibling:
            answer.append(item)
    records = page.find_all(name='div',
                            class_="zm-profile-section-item zm-item clearfix")
    if not records or records[-1]['data-time'] == start:
        break  # nothing left, or the cursor stopped advancing
    # "More" cursor: data-time of the last record on the page just parsed
    start = records[-1]['data-time']
    data_more = {'start': start}
    response4 = opener.open(url_more, up.urlencode(data_more).encode('utf-8'))
    html3 = response4.read().decode('utf-8')
    page = BeautifulSoup(html3, 'html.parser')
    time.sleep(1)  # pause between requests

# Download the images
route = 'D:\\vczh_pic'
os.makedirs(route, exist_ok=True)
os.chdir(route)
for each in answer:
    link = each.span.next_sibling.next_sibling['href']
    link = ''.join(['http://www.zhihu.com', link])
    res = opener.open(link).read().decode('utf-8')
    soup = BeautifulSoup(res, 'html.parser')
    pic = soup.find_all(attrs={'data-rawwidth': '1280', 'class': 'origin_image zh-lightbox-thumb lazy'})
    for each_pic in pic:
        src = each_pic['data-actualsrc']
        # File name = image URL with the https://picN.zhimg.com/ prefix removed
        pic_name = re.sub(r'https://pic\d\.zhimg\.com/', '', src)
        pic_res = ur.urlopen(src).read()
        with open(pic_name, 'wb') as f:
            f.write(pic_res)
```
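For the "More" loop to make progress, each request's `start` has to come from the last record of the most recently fetched page, and collected answers have to accumulate rather than be replaced. A minimal sketch of that pattern, independent of Zhihu: `collect_until` is a hypothetical helper, `fetch_page` is a hypothetical callable standing in for the real POST (assumed to return `(data_time, item)` pairs, newest first), and `FEED`/`fake_fetch` are made-up test data.

```python
def collect_until(fetch_page, first_page, want):
    """Gather up to `want` unique items by following a data-time cursor.

    fetch_page(start) is a hypothetical callable returning a list of
    (data_time, item) pairs for the records after `start`.
    """
    collected, seen = [], set()
    page = first_page
    while page:
        new = 0
        for _, item in page:
            if item not in seen:
                seen.add(item)
                collected.append(item)
                new += 1
        if len(collected) >= want or new == 0:
            break  # enough items, or the server returned nothing new
        # Advance the cursor from the page just received, not the first page
        page = fetch_page(page[-1][0])
    return collected[:want]


# Made-up feed: data_time 100, 99, ..., 91 (newest first)
FEED = [(100 - i, "item%d" % i) for i in range(10)]


def fake_fetch(start, size=3):
    # Return the next `size` records older than `start`
    return [r for r in FEED if r[0] < start][:size]


print(collect_until(fake_fetch, FEED[:3], 7))
# ['item0', 'item1', 'item2', 'item3', 'item4', 'item5', 'item6']
```

The `new == 0` check is a cheap guard against a cursor that never moves: if the same page comes back twice, the loop stops instead of requesting it forever.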

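I've tested this code section by section: logging in, filtering the records, and downloading the images all work. The problem is, again, the step that simulates clicking "More".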

Here is the error:

```
Traceback (most recent call last):
  File "C:\Users\Administrator\Desktop\轮带逛.py", line 71, in <module>
    response3 = opener.open(url_more, up.urlencode(data_more).encode('utf-8'))
  File "D:\Python34\lib\urllib\request.py", line 469, in open
    response = meth(req, response)
  File "D:\Python34\lib\urllib\request.py", line 579, in http_response
    'http', request, response, code, msg, hdrs)
  File "D:\Python34\lib\urllib\request.py", line 507, in error
    return self._call_chain(*args)
  File "D:\Python34\lib\urllib\request.py", line 441, in _call_chain
    result = func(*args)
  File "D:\Python34\lib\urllib\request.py", line 587, in http_error_default
    raise HTTPError(req.full_url, code, msg, hdrs, fp)
urllib.error.HTTPError: HTTP Error 403: Forbidden
```
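`urllib.error.HTTPError` is itself a response object, so the body the server sent along with the 403 can be read instead of only seeing the status line; that body may say why the request was refused. A small sketch: `open_or_explain` and `FakeOpener` are hypothetical, and the error body is made up so the demo runs offline.

```python
import io
import urllib.error


def open_or_explain(opener, url, data=None):
    """Open `url` through `opener`; on an HTTP error return (status, body)
    instead of raising, so the server's reason for a 403 can be inspected."""
    try:
        resp = opener.open(url, data)
    except urllib.error.HTTPError as e:
        # HTTPError doubles as a response: e.code is the status and
        # e.read() returns the body the server sent with the error.
        return e.code, e.read().decode("utf-8", "replace")
    with resp:
        return resp.getcode(), resp.read().decode("utf-8", "replace")


class FakeOpener:
    """Offline stand-in that always answers 403 with a made-up body."""

    def open(self, url, data=None):
        raise urllib.error.HTTPError(url, 403, "Forbidden", {},
                                     io.BytesIO(b"made-up error body"))


print(open_or_explain(FakeOpener(), "https://www.zhihu.com/people/excited-vczh/activities"))
# (403, 'made-up error body')
```

With the real objects from the script above, the call would be `open_or_explain(opener, url_more, up.urlencode(data_more).encode('utf-8'))`.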

I'm completely stuck. Do I really have to use selenium? How should I change the code?
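Before reaching for selenium, two request details are worth checking. First, `OpenerDirector` reads extra headers from `addheaders` (plural); the original `opener.addheader = [...]` only creates an unused attribute, so the default `Python-urllib` User-Agent is what actually gets sent. Second, the login POST carries `_xsrf`; it is an assumption, not confirmed here, that the `activities` endpoint expects the same token, and possibly the `X-Requested-With`/`Referer` headers a browser's AJAX call would send. The sketch below builds such a request without sending it; `make_opener` and `build_more_request` are hypothetical helpers.

```python
import http.cookiejar
import urllib.parse
import urllib.request

UA = ("Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 "
      "(KHTML, like Gecko) Chrome/51.0.2704.106 Safari/537.36")


def make_opener():
    opener = urllib.request.build_opener(
        urllib.request.HTTPCookieProcessor(http.cookiejar.CookieJar()))
    # `addheaders` (plural) replaces the default Python-urllib User-Agent;
    # setting `addheader` would leave the default in place.
    opener.addheaders = [("User-Agent", UA)]
    return opener


def build_more_request(url, start, xsrf, referer):
    # Assumption: the endpoint wants the _xsrf token in the form body,
    # as the login form does, plus browser-style AJAX headers.
    body = urllib.parse.urlencode({"start": start, "_xsrf": xsrf}).encode("utf-8")
    headers = {
        "X-Requested-With": "XMLHttpRequest",
        "Referer": referer,
    }
    return urllib.request.Request(url, data=body, headers=headers)


req = build_more_request(
    "https://www.zhihu.com/people/excited-vczh/activities",
    "1466990000", "abc123",  # made-up start and token values
    "https://www.zhihu.com/people/excited-vczh")
print(req.get_method(), req.data)  # POST b'start=1466990000&_xsrf=abc123'
```

Sending it would be `make_opener().open(req)` (after logging in with that same opener so the cookies match). Note that `Request` stores header names via `str.capitalize()`, so `req.get_header("X-requested-with")` is the lookup form.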


( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)
